Rebuilding AKS Clusters

Re-building a cluster

Pre-requisites

- Ensure you have activated the PIM Azure Contributor role via PIM for the subscription of the cluster you want to rebuild, and that you have permissions to delete the cluster in Azure Portal.

- You will need to remove the resource locks on the resource group via the portal if indicated by the corresponding Azure pipeline. It is best practice to do this before deleting the cluster, to avoid any potential issues with the pipeline.

- Make Dynatrace aware in #dynatrace-help-admins, since this has a disruptive effect on their monitoring services. With advance notice they can create maintenance windows during the change.

- Announce in the relevant Slack channels #cloud-native-announce and #sds-cloud-native several days before the change to give plenty of advance notice.
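The resource lock prerequisite above can also be checked from the command line before touching the portal. This is a minimal sketch, assuming the Azure CLI is already logged in to the right subscription; the helper name `list_rg_locks` and the resource group argument are illustrative and not part of the existing tooling.

```shell
# Hypothetical helper: list the resource locks on a resource group
# as "<name><TAB><level>" lines. The resource group name is an argument.
list_rg_locks() {
  local rg="$1"
  az lock list --resource-group "$rg" --query "[].[name, level]" -o tsv
}

# Example usage (placeholders, not real names):
#   list_rg_locks <resource-group>
#   az lock delete --name <lock-name> --resource-group <resource-group>
```

An empty result suggests there are no locks left on the resource group to remove.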

Common Steps and Pipeline Use

To re-build the cluster you need to run the pipeline in a given environment, and for the given cluster you deleted:

Click on Run pipeline (blue button) in the top right of Azure DevOps. Ensure that Action is set to Plan first to see the expected actions, that Cluster is set to the one you want to build, and that the environment is selected from the drop-down menu.

Click on Run similar to above but now with Action set to Apply to start the pipeline and build.

Rebuilding a cluster

(If performing an AKS Upgrade)

- Merge PR to remove a cluster from Application Gateway, e.g. remove SDS Prod 01.

- Check that the relevant Application Gateway backend pools now point to only 1 target instead of 2.

- Merge PR(s) to toggle the ACTIVE_CRON_CLUSTER value in the flux repo, e.g. for SDS Prod set 00 to false and 01 to true if you are rebuilding the 00 cluster, and vice versa if you are rebuilding the 01 cluster.

- Raise PR to upgrade the AKS version of the required cluster, see this example

- Run the ADO pipeline as mentioned above to see a plan for the env

- Delete the AKS cluster that was removed from the Application Gateway

- Merge the PR to upgrade the AKS version of the required cluster

- Run an apply of the deploy pipeline to rebuild the cluster

- Add the cluster back into Application Gateway once you have confirmed deployment has been successful, for example add SDS Prod 01

(If you just need to rebuild the cluster, without an AKS version upgrade)

- Run the ADO pipeline as mentioned above to build the AKS cluster as it’s defined in code

- Add the cluster back into Application Gateway once you have confirmed deployment has been successful, for example add SDS Prod 01
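The backend pool check in the upgrade steps above (1 target instead of 2) can be scripted. The sketch below is one possible way to do it, assuming the Azure CLI is logged in and that each pool exposes a `backendAddresses` list; the helper name and both arguments are hypothetical placeholders.

```shell
# Hypothetical helper: print every backend pool of an Application Gateway
# with its number of targets, marking pools that do not have exactly one.
# Gateway and resource group names are arguments (not taken from the runbook).
check_backend_pools() {
  local gateway="$1" rg="$2"
  az network application-gateway address-pool list \
    --gateway-name "$gateway" --resource-group "$rg" \
    --query "[].[name, length(backendAddresses)]" -o tsv |
    awk -F'\t' '{ printf "%s %s %s\n", $1, $2, ($2 == 1 ? "OK" : "CHECK") }'
}

# Example usage (placeholders):
#   check_backend_pools <application-gateway-name> <resource-group>
```

Any pool marked CHECK still has zero or more than one target and is worth inspecting before the cluster is deleted.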

Environment Specific

Dev/Preview, AAT/Staging

- Change Jenkins to use the other cluster that is not going to be rebuilt

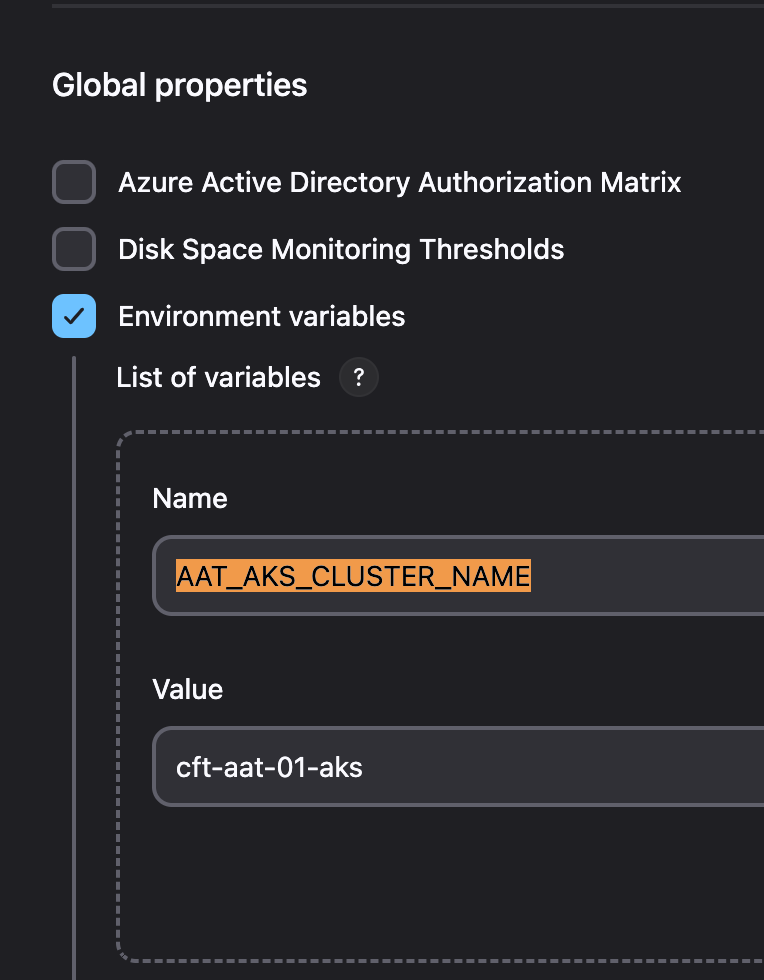

If the configuration doesn’t update after your pull request has been merged, you can change it manually in the Jenkins Configuration.

You will find the same Environment variables for the PRs shown above in this configuration page:

After deployment of a cluster

- Change Jenkins to use the other cluster, e.g. cnp-flux-config#19886.

- After merging the above PR, if Jenkins is not updated (check Here), you MUST manually change the Jenkins config to use the other cluster.

- If it is a preview environment, swap external DNS active cluster, example PR

- If it is an AAT Environment, you will need to update the Private DNS record to point to the new cluster

- There are 2 locations for this change:

- The private DNS entries should be set to the kubernetes internal loadbalancer IP (Traefik).

- See common upgrade steps for how to retrieve the IP address.

- Create a test PR, normally we just make a README change to rpe-pdf-service.

- Example PR. Check the PR in Jenkins has successfully run through the stage AKS deploy - preview

- Send out comms on the #cloud-native-announce Slack channel regarding the swap over to the new preview cluster, see the example announcement below:

Hi all, Preview cluster has been swapped to cft-preview-00-aks.

You can log in using:

az aks get-credentials --resource-group cft-preview-00-rg --name cft-preview-00-aks --subscription DCD-CFTAPPS-DEV --overwrite

- Delete all ingress on the old cluster to ensure external-dns deletes its existing records:

kubectl delete ingress --all-namespaces -l '!helm.toolkit.fluxcd.io/name,app.kubernetes.io/managed-by=Helm'

- Delete any orphan A records that external-dns might have missed:

- Replace X.X.X.X with the loadbalancer IP (kubernetes-internal) of the cluster you want to cleanup

# Private DNS

az network private-dns record-set a list --zone-name service.core-compute-preview.internal -g core-infra-intsvc-rg --subscription DTS-CFTPTL-INTSVC --query "[?aRecords[0].ipv4Address=='X.X.X.X'].[name]" -o tsv | xargs -I {} -n 1 -P 8 az network private-dns record-set a delete --zone-name service.core-compute-preview.internal -g core-infra-intsvc-rg --subscription DTS-CFTPTL-INTSVC --yes --name {}

az network private-dns record-set a list --zone-name preview.platform.hmcts.net -g core-infra-intsvc-rg --subscription DTS-CFTPTL-INTSVC --query "[?aRecords[0].ipv4Address=='X.X.X.X'].[name]" -o tsv | xargs -I {} -n 1 -P 8 az network private-dns record-set a delete --zone-name preview.platform.hmcts.net -g core-infra-intsvc-rg --subscription DTS-CFTPTL-INTSVC --yes --name {}
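Because the commands above delete records in bulk, it can help to preview the matches first. The sketch below is a dry-run variant under a few assumptions: the helper names are illustrative, and the Traefik service is assumed to be `traefik` in the `admin` namespace (as the log lines later in this page suggest). The resource group and subscription are the ones used in the commands above.

```shell
# Assumption: the internal load balancer IP belongs to the service admin/traefik.
# Run this against the cluster you want to clean up.
traefik_lb_ip() {
  kubectl get svc traefik -n admin \
    -o jsonpath='{.status.loadBalancer.ingress[0].ip}'
}

# Hypothetical dry-run helper: print the names of the A records in a private
# DNS zone whose first address equals the given IP. Nothing is deleted.
list_a_records_for_ip() {
  local zone="$1" ip="$2"
  az network private-dns record-set a list \
    --zone-name "$zone" -g core-infra-intsvc-rg --subscription DTS-CFTPTL-INTSVC \
    --query "[?aRecords[0].ipv4Address=='${ip}'].[name]" -o tsv
}

# Example usage:
#   list_a_records_for_ip service.core-compute-preview.internal X.X.X.X
#   list_a_records_for_ip preview.platform.hmcts.net X.X.X.X
```

If the printed names look right, the delete commands above can then be run with the same IP.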

Delete any TXT records pointing to inactive

# Private DNS

az network private-dns record-set txt list --zone-name preview.platform.hmcts.net -g core-infra-intsvc-rg --subscription DTS-CFTPTL-INTSVC --query "[?contains(txtRecords[0].value[0], 'inactive')].[name]" -o tsv | xargs -I {} -n 1 -P 8 az network private-dns record-set txt delete --zone-name preview.platform.hmcts.net -g core-infra-intsvc-rg --subscription DTS-CFTPTL-INTSVC --yes --name {}

# Public DNS

az network dns record-set txt list --zone-name preview.platform.hmcts.net -g reformmgmtrg --subscription Reform-CFT-Mgmt --query "[?contains(txtRecords[0].value[0], 'inactive')].[name]" -o tsv | xargs -I {} -n 1 -P 8 az network dns record-set txt delete --zone-name preview.platform.hmcts.net -g reformmgmtrg --subscription Reform-CFT-Mgmt --yes --name {}

Once the swap over is fully complete then you can delete the old cluster

- Comment out the inactive preview cluster.

- Example PR.

- Once the PR has been approved and merged run this pipeline

Perftest

Before deployment of a cluster

- Confirm that the environment is not being used with Nickin Sitaram before starting.

- Use the Slack channel pertest-cluster for communication.

- Scale up the number of active nodes: increase by 5 nodes if removing a cluster. The reason for this is that CCD and IDAM will auto-scale the number of running pods when a cluster is taken out of service for an upgrade.
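The node increase above can be worked out from the current pool size. The following is only a sketch: the helper name and all arguments are hypothetical, it prints the scale command for review rather than running it, and a manual scale may be rejected if the cluster autoscaler is enabled on the pool or may be reverted if node counts are managed in Terraform.

```shell
# Hypothetical helper: read the current node count of a node pool and print
# the az command that would scale it up by an increment (default 5).
# It does not change anything itself.
plan_nodepool_scale() {
  local rg="$1" cluster="$2" pool="$3" extra="${4:-5}"
  local current
  current="$(az aks nodepool show --resource-group "$rg" --cluster-name "$cluster" \
    --name "$pool" --query count -o tsv)" || return 1
  echo "az aks nodepool scale --resource-group $rg --cluster-name $cluster --name $pool --node-count $((current + extra))"
}

# Example usage (placeholders):
#   plan_nodepool_scale <resource-group> <cluster-name> <nodepool-name>
```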

After deployment of a cluster

- Check all pods are deployed and running. Compare with the pod status reference taken pre-deployment.
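One way to take the pod status reference and compare it afterwards is to summarise pods per namespace and phase, since pod names change after a rebuild. This is a sketch only; the helper name and the output file names are illustrative.

```shell
# Hypothetical helper: summarise pods as "<count> <namespace> <phase>" lines,
# so that snapshots from before and after the rebuild can be diffed.
pod_status_summary() {
  kubectl get pods --all-namespaces --no-headers \
    -o custom-columns=NS:.metadata.namespace,PHASE:.status.phase |
    awk '{ print $1, $2 }' | sort | uniq -c | awk '{ print $1, $2, $3 }'
}

# Example usage:
#   pod_status_summary > pods-before.txt   # before removing the cluster
#   pod_status_summary > pods-after.txt    # after the rebuild
#   diff pods-before.txt pods-after.txt
```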

PTL

Rebuilding PTL is very disruptive and must be done in a change request out of hours, with enough time to fix issues as they arise. It is not in any HA setup, so it causes complete downtime for Jenkins and all developer workflows, and stops all image automation across the relevant platform whilst it’s down.

Announce with plenty of advance notice, and coordinate with upcoming releases to minimise disruption and risk.

It follows this subset of the steps, since there is no Application Gateway in front of the cluster.

- Raise PR to upgrade the AKS version of the required cluster, see this example

- Delete the AKS cluster

- Merge the PR to upgrade the AKS version of the required cluster

- Run the ADO deploy pipeline as mentioned above

Known issues

Diagnostic Settings already exist

If you have to re-run a failed stage, you may see this error in the pipeline. The existing diagnostic settings can simply be deleted from the portal and will be re-added by your Terraform run.
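If you prefer the command line to the portal, the diagnostic settings can be listed and removed with the Azure CLI. This is a sketch under assumptions: the helper name is illustrative and the resource ID of the affected resource has to be supplied by you.

```shell
# Hypothetical helper: list the diagnostic settings attached to a resource.
# The full resource ID is an argument (taken from the failing pipeline output).
list_diag_settings() {
  local resource_id="$1"
  az monitor diagnostic-settings list --resource "$resource_id" -o table
}

# Example usage (placeholders):
#   list_diag_settings <resource-id>
#   az monitor diagnostic-settings delete --name <setting-name> --resource <resource-id>
```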

Bootstrap fails with error

ERROR: Unable to install flux.

This most likely needs a re-run of the Bootstrap stage if the error before this is related to the cert-manager pod not being ready in time.

Neuvector

- admission webhook "neuvector-validating-admission-webhook.neuvector.svc" denied the request: these alerts can be seen on the aks-neuvector-<env> Slack channels.

- This happens when Neuvector is broken.

- Check events and status of neuvector helm release.

- Delete Neuvector Helm release to see if it comes back fine.

- Neuvector fails to install.

- Check if all enforcers come up in time, they could fail to come if nodes are full.

- If they keep failing with race conditions, it could be due to backups being corrupt.

- Usually policy.backup and admission_control.backup are the ones you need to delete from the Azure file share if they are corrupt.
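The Neuvector checks above can be gathered in one go. The sketch below assumes the HelmRelease and pods live in the `neuvector` namespace (inferred from the webhook service name); the helper name is illustrative.

```shell
# Hypothetical helper: show the Flux HelmRelease status, the pods (including
# enforcers and the nodes they run on) and recent events for Neuvector.
# Assumption: everything is in the "neuvector" namespace unless overridden.
neuvector_status() {
  local ns="${1:-neuvector}"
  kubectl get helmreleases.helm.toolkit.fluxcd.io -n "$ns"
  kubectl get pods -n "$ns" -o wide
  kubectl get events -n "$ns" --sort-by=.lastTimestamp
}

# Example usage:
#   neuvector_status
```

Enforcer pods stuck in Pending in this output would be consistent with the nodes being full.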

Dynatrace oneagent pods not deployed or failing to start

NOTE: Whether the issue described here still applies needs to be validated once the updated Dynatrace Operator is rolled out.

On a rebuilt or newly deployed cluster, Dynatrace oneagent pods are either not deployed by Flux or, where deployed, fail with a CrashLoopBackOff status.

Dynatrace Helm Chart requires CRDs to be applied before installing the chart. The CRDs currently need to be manually applied as they are not part of the existing Flux config.

Run the below command on the cluster. An empty result confirms CRDs are not installed.

kubectl get crds | grep oneagent

To fix, run the below command to apply CRDs to the cluster:

kubectl apply -f https://github.com/Dynatrace/dynatrace-oneagent-operator/releases/latest/download/dynatrace.com_oneagents.yaml

kubectl apply -f https://github.com/Dynatrace/dynatrace-oneagent-operator/releases/latest/download/dynatrace.com_oneagentapms.yaml

Traefik HelmRelease stuck in Unknown status

When you check the traefik2 HelmRelease, it is stuck in Unknown status with the error below:

2026-02-20T14:42:20.743329203Z: beginning wait for 7 resources with timeout of 5m0s

2026-02-20T14:42:20.75259956Z: Service does not have load balancer ingress IP address: admin/traefik

2026-02-20T14:47:18.753667495Z: Service does not have load balancer ingress IP address: admin/traefik (148 duplicate lines omitted)

This is due to the Traefik service not having an ingress IP address assigned, because the IP address has somehow been assigned to a node.

To fix this, you can uninstall the traefik2 HR, identify the node, remove the node from the cluster, and then reinstall the HelmRelease.

# find out the LoadBalancer 'traefik' IP addresses

kubectl get svc -A | grep LoadBalancer

# see a list of nodes in the cluster and their internal IP addresses

kubectl get nodes -o wide

# to uninstall the traefik2 HR

helm uninstall traefik2 -n admin

# to delete the node with the IP address assigned to the LoadBalancer service

kubectl delete node <node-name>
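Matching the load balancer IP against the node list by eye is error-prone, so the lookup can be scripted. This is a minimal sketch; the helper name is illustrative and the IP address is passed in as an argument.

```shell
# Hypothetical helper: print the name of the node whose InternalIP equals
# the given address (the IP expected on the traefik LoadBalancer service).
find_node_by_ip() {
  local ip="$1"
  kubectl get nodes \
    -o jsonpath='{range .items[*]}{.metadata.name}{"\t"}{.status.addresses[?(@.type=="InternalIP")].address}{"\n"}{end}' |
    awk -F'\t' -v ip="$ip" '$2 == ip { print $1 }'
}

# Example usage (placeholder IP):
#   find_node_by_ip X.X.X.X
```

An empty result means no node currently holds that address. To bring the release back after the node is removed, one option is `flux reconcile helmrelease traefik2 -n admin`, assuming the Flux CLI is available and the HelmRelease object lives in the admin namespace.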